GPUs expose broad functionality for graphics and machine learning workloads. At the same time, this functionality may be exploited because of large amounts of unvetted code, complex functionality, and the information gap between user-space applications, the kernel, and the auxiliary GPU. We introduce a novel framework that repurposes the WebGL security checks from the Chrome browser to protect the Android kernel against active exploitation by malicious apps at low performance overhead.

This post discusses the open-source release of our CCS'18 Milkomeda paper. This is joint work between Zhihao Yao, Saeed Mirzamohammadi, Ardalan Amiri Sani, and Mathias Payer.

New usage scenarios result in new threats

With the rise of machine learning workloads and modern games that require powerful computation, GPUs have become massively parallel computing co-processors that expose a complex and versatile interface. This complex interface enables the flexibility and performance required by modern workloads but increases the attack surface. Current operating systems do not enforce scheduling and privilege separation between different GPU workloads. The operating system simply exposes the interface to user-space programs, enabling them to use the vast functionality of the hardware at low overhead.

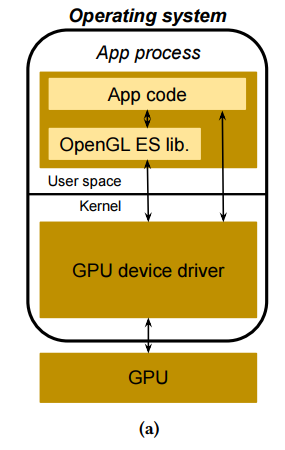

In the intended usage scenario, a user-space library provides access to GPU functionality and calls into the kernel driver, which forwards the data to the GPU, where the computation is executed. This scenario exposes bugs at three different locations. First, the user-space library may be buggy, crashing the calling process. Second, the kernel driver may be buggy, crashing the kernel (and all processes). Third, the code running on the GPU may be buggy, crashing the GPU (and all kernels currently running on the GPU). It's interesting to note that a user-space program is not restricted to the functionality exported by the user-space library: it can also directly use the (often only partially documented) functionality of the kernel driver.

The original threat model focused on local applications running either gaming or machine learning workloads. These trusted workloads may crash if they are programmed incorrectly, but security was not a concern. With the rise of graphics functionality in browsers, the threat model is changing. The exposed attack surface of the GPU kernel interface has been exploited in several attacks against, e.g., Google Chrome, where bugs in the GPU render process allow further privilege escalation.

WebGL: exposing ioctl to JavaScript

WebGL now enables untrusted websites to access the OpenGL interface through JavaScript. While this is great news for JavaScript programmers who want to program 3D workloads, it is terrible news for security, as a highly complex interface is now exposed to untrusted code.

("This is fine" comic by KC Green.)

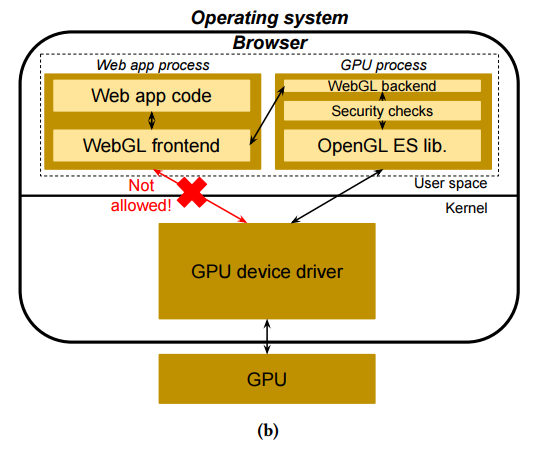

Given the uncertain provenance of the code and the large number of security vulnerabilities in user-space libraries and kernel drivers, the Google Chrome team deployed a safety net: an interposition layer that checks every GPU call before it is sent to the GPU. A local shim library forwards the GPU call to a separate process where the GPU state is replicated and the call is verified against the current GPU state. After passing the checks, the call is forwarded by the separate process to the GPU. While this adds some overhead due to inter-process communication (and the checks), it protects the kernel and the GPU from unvetted calls.

The figure above shows the Chrome WebGL security checks. WebGL calls are sent to the secure process where they are checked and then forwarded to the kernel.
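To make the idea of checking a call against replicated state concrete, here is a minimal sketch. It is not Chrome's code: the `Validator` and `ShadowState` classes, the two calls, and the `forwarded` list that stands in for the driver are all hypothetical, and everything runs in a single process, whereas Chrome performs the checks in a separate process.

```python
from dataclasses import dataclass, field


class RejectedCall(Exception):
    """Raised when a call fails validation and must not reach the driver."""


@dataclass
class ShadowState:
    """Replica of the GPU state the checks need (hypothetical, tiny subset)."""
    buffer_sizes: dict = field(default_factory=dict)  # buffer id -> size in bytes


@dataclass
class Validator:
    """Hypothetical interposition layer: check first, forward second."""
    state: ShadowState = field(default_factory=ShadowState)
    forwarded: list = field(default_factory=list)  # stand-in for the real driver

    def create_buffer(self, buf_id: int, size: int) -> None:
        if size <= 0:
            raise RejectedCall("buffer size must be positive")
        if buf_id in self.state.buffer_sizes:
            raise RejectedCall("buffer id already in use")
        # Record the allocation so later calls can be checked against it.
        self.state.buffer_sizes[buf_id] = size
        self._forward("create_buffer", buf_id, size)

    def write_buffer(self, buf_id: int, offset: int, data: bytes) -> None:
        size = self.state.buffer_sizes.get(buf_id)
        if size is None:
            raise RejectedCall("unknown buffer")
        if offset < 0 or offset + len(data) > size:
            raise RejectedCall("write outside buffer bounds")
        self._forward("write_buffer", buf_id, offset, len(data))

    def _forward(self, name: str, *args) -> None:
        # Only calls that passed every check end up here.
        self.forwarded.append((name, *args))
```

In this sketch, a write that stays inside a previously created buffer is forwarded, while an out-of-bounds write raises `RejectedCall` and never reaches the stand-in driver. The bounds check is only possible because the validator tracked the earlier allocation.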

These WebGL checks are limited along two dimensions. First, they are restricted to OpenGL calls and do not cover, e.g., the CUDA computational interface (due to both the massive additional complexity and the closed-source nature of the computational interfaces). Second, they are incomplete. Due to the almost one-to-one mapping between OpenGL and WebGL, the amount of functionality is massive and checks are therefore reactive. The Chrome developers have added checks for frequently attacked interfaces or interfaces with certain bug patterns. The checks are continuously extended and improved, increasing the guarantees with every release.

Android GPU security

Android is exposed to issues similar to those of WebGL: untrusted applications ("apps") access the exposed GPU interface either through native libraries (hopefully the ones supplied by the Android system) or by calling the native ioctl interface directly.

The figure above shows the default Android security stack: OpenGL (and other GPU calls) are never vetted and applications have direct access to the exposed interface.

The only reason why we have not yet seen a large number of local privilege escalation attacks against Android through the GPU interface is the combination of a lack of knowledge about this interface and the availability of easier targets. With the deployment of new defenses on Android, the GPU interface will become a prime target.

Milkomeda: reuse checks

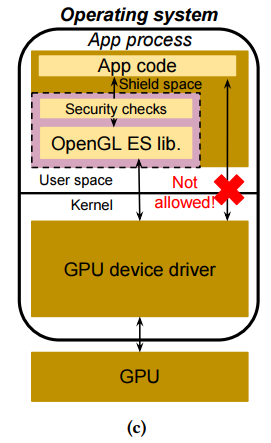

We decouple the GPU interface from user-space processes and force all interactions with the GPU through our interposition layer. The key idea of our system is to automatically reuse the WebGL checks from Chrome, extracting them dynamically and weaving them into our interposition layer. This allows us to reduce the cost of check development. Additionally, new checks will be imported automatically when they are added to Chrome, further reducing maintenance cost.

For WebGL, performance is less critical than for "native" Android applications, so Chrome can tolerate the inter-process communication overhead of a separate checking process. To avoid that overhead, we design and implement a safe area inside the application process that executes the GPU checks. During regular execution, the safe area remains hidden. Through a call gate that is injected when GPU functionality is accessed, control flow is transferred to this safe area. All safety-critical arguments are copied into the safe area, where they are checked. Non-safety-critical arguments (as specified by the checks) can remain in the process, and the checks can inspect them without additional overhead.

The figure above shows the Milkomeda layout: OpenGL calls are redirected to the safe area where they are vetted and checked before being forwarded to the kernel, protecting the Android system from potentially malicious applications at low performance overhead.
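The copy-then-check step can be sketched as follows. This is a toy model, not the Milkomeda implementation: `SafeArea`, `call_gate`, and the `draw` call are hypothetical names, and Python cannot model the memory isolation that hides the real safe area. The sketch also assumes that the purpose of the copy is to stop the application from changing an argument after it has been checked.

```python
class SafeArea:
    """Hypothetical stand-in for the protected region that runs the checks."""

    def __init__(self, max_vertices: int):
        self._max_vertices = max_vertices
        self.forwarded = []  # stand-in for calls passed on to the kernel driver

    def draw(self, indices: tuple, label: str) -> None:
        # The check runs on the private copy, never on caller-owned memory.
        if any(i < 0 or i >= self._max_vertices for i in indices):
            raise ValueError("vertex index out of range")
        self.forwarded.append((indices, label))


def call_gate(safe_area: SafeArea, indices: list, label: str) -> None:
    """Hypothetical call gate: the only entry point into the safe area."""
    # Safety-critical argument: take an immutable snapshot before checking.
    private_indices = tuple(indices)
    # Non-safety-critical argument (label): passed through without a copy.
    safe_area.draw(private_indices, label)
```

Because the checked value is the snapshot, a caller that mutates its own `indices` list after `call_gate` returns cannot alter what was validated and forwarded.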

For more technical details and a discussion of design and implementation trade-offs, please refer to the ACM CCS'18 Milkomeda paper. We have also released the full source code of our Milkomeda implementation on GitHub, ready for reproduction of our results as well as future extensions!